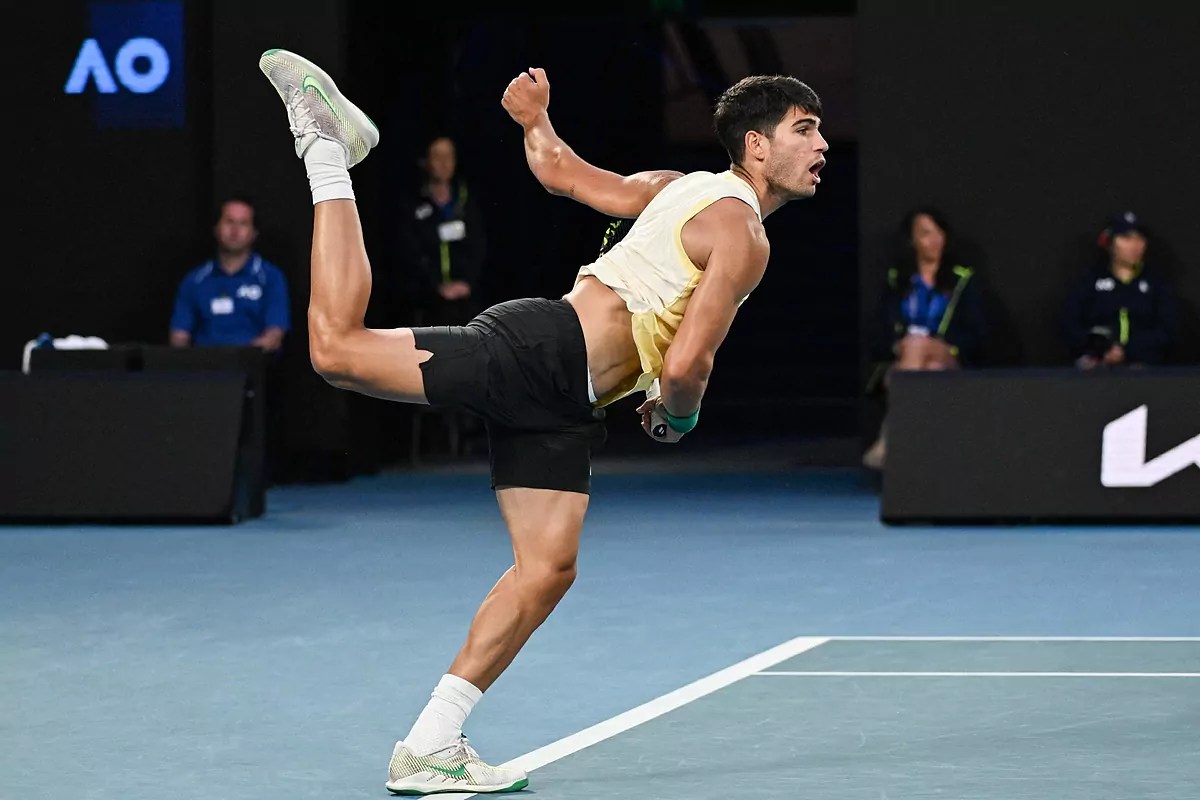

Martin Landaluce, Rafael Jodar, Carlos Alcaraz. Lorenzo Musetti, Luciano Darderi, Jannik Sinner. It seems like the conveyor belt of young players from Spain and Italy never stops. At some point, it stops being a coincidence.

When countries repeatedly produce not just elite players, but wave after wave of professionals, there is usually something structural underneath it.

A lot of the discussion around player development focuses on “clean” technique, fitness, mental maturity, and coaching philosophies. All of those things matter. But I think one of the biggest advantages Spain and Italy have is much simpler:

Their players grow up inside systems built around constant competition.

The Hidden Advantage

In 2026, Spain will host 31 Futures tournaments (Futures are the lowest rung of professional tennis, where players earn their first ATP points). Italy will host 23.

Similarly, in just the first seven months of 2026, from January through July, Spain will host 9 Challenger events (the level just below the ATP Tour events most people see on TV), and Italy will host 14(!!).

Canada is nowhere near that level. As of right now, there are 3 Futures tournaments scheduled in Canada in 2026, and 3 Challengers scheduled between now and July.

The exact number of tournaments might change a little from year to year, but the difference is massive.

And that difference changes development.

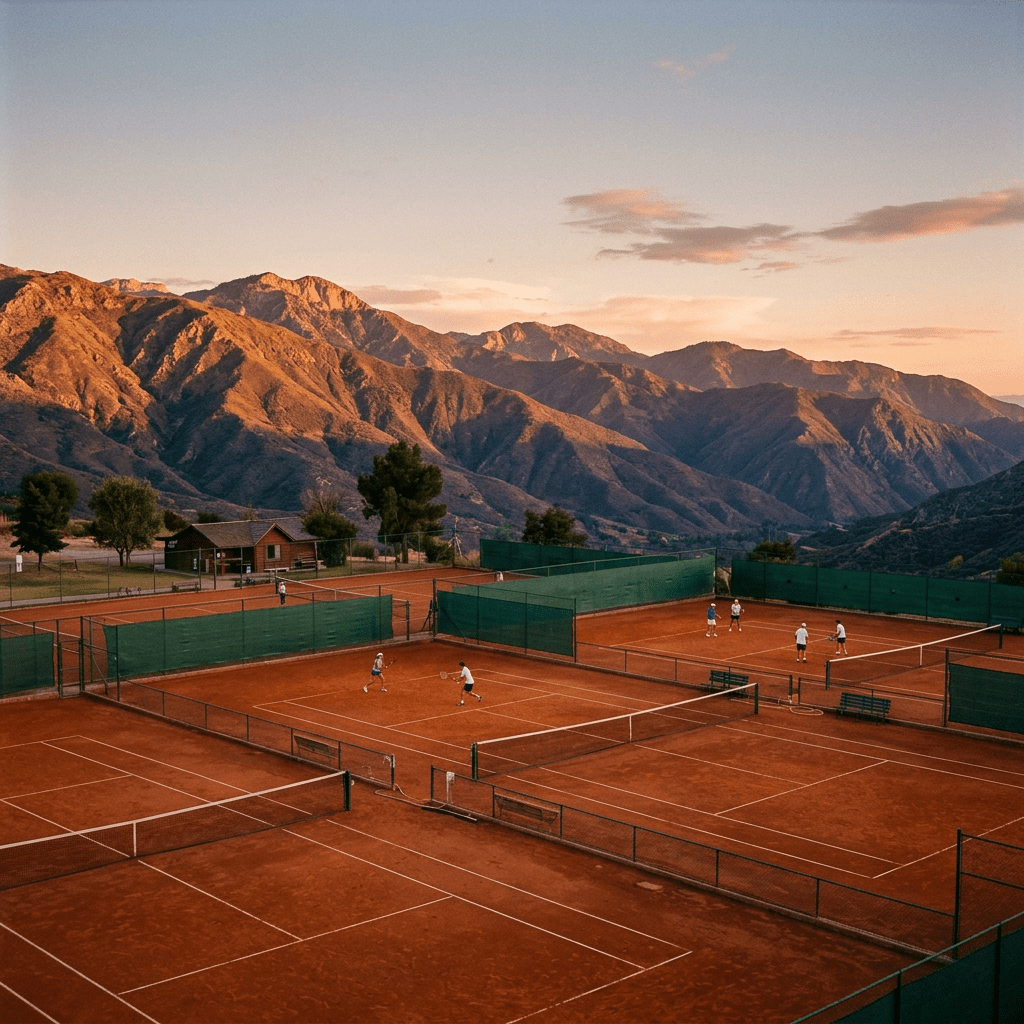

A player based in Spain or Italy can lose the first round one week, drive a few hours, and compete again next week, often on the same surface.

That creates a completely different developmental environment than what most Canadian players experience.

Competing professionally becomes normal instead of exceptional.

In Spain or Italy, a young player may personally know dozens of others trying to make it professionally. Futures and Challengers are not some distant level you occasionally hear about. They are part of the local tennis ecosystem.

In Canada, most aspiring pros probably know only a handful of players attempting that path seriously.

Why This Matters So Much

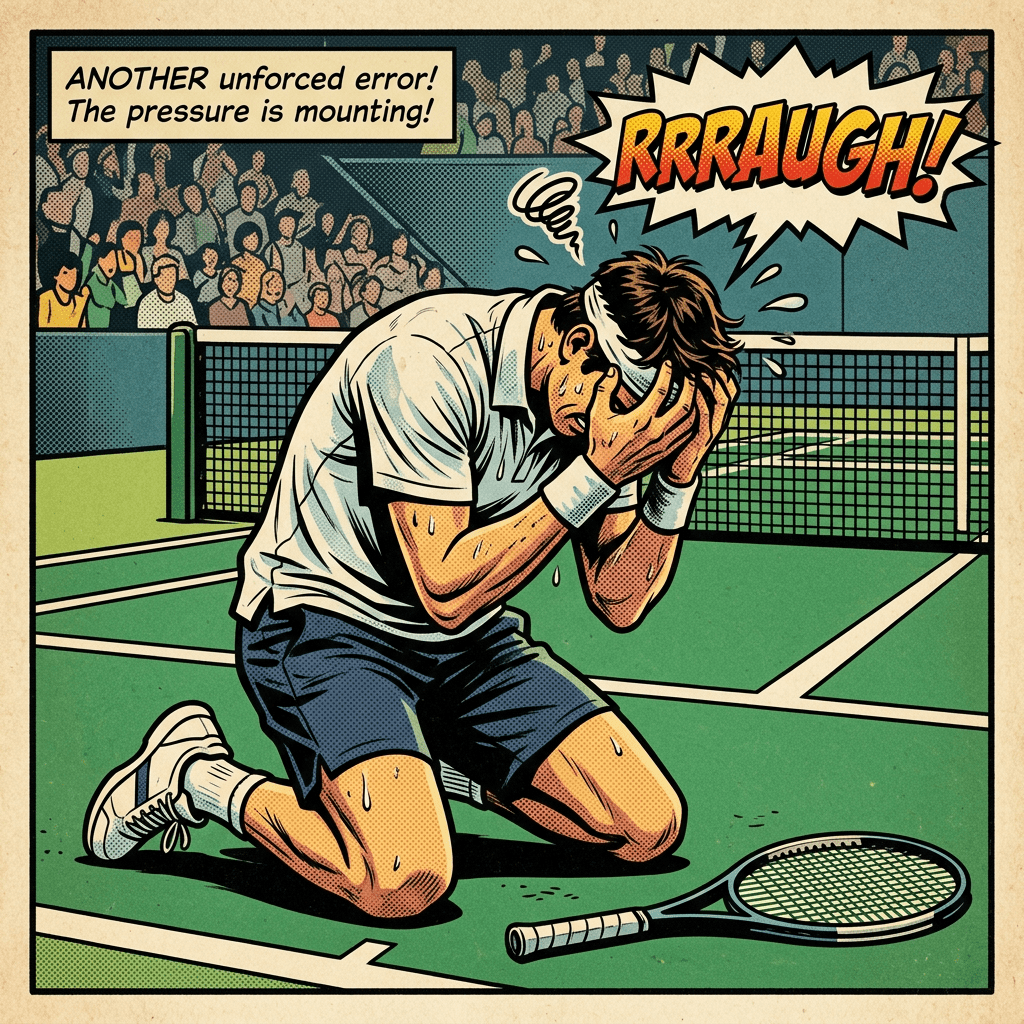

A lot of improvement in tennis does not happen in practice. Matches force players to solve problems, regulate emotions, figure out tactical adjustments, and manage pressure. Those things develop fastest through repetition in real competitive environments.

Not through another controlled practice set.

Real competition exposes weaknesses quickly. And when players compete every week, they get faster feedback loops.

Europe Allows Players to Stay Inside the System

One of the biggest advantages in Spain and Italy is not just the number of tournaments. It is the ability to stay inside the ecosystem consistently and simply keep competing.

A player can build long stretches of competition with:

- minimal flights

- lower travel costs

- less disruption to training

- familiar surfaces and conditions

For example, Martin Landaluce played his first pro event in October 2022. Between October 2022 and January 1st, 2024, he played 29 events. Of those 29, 19 were in Spain, and a few others were in neighbouring Portugal or France. He went from unranked to #450 in the ATP rankings without having to travel prohibitively far, while keeping his expenses under control and competing at clubs he had probably been to a few times before.

A Canadian player often has to spend heavily just to access enough professional-level matches: flights, hotels, rental cars, coaching expenses (if those are even realistic), and weeks away from home.

Eventually, every tournament starts feeling financially important. Every loss matters a little more. That changes development too.

The Canadian Challenge

Canada has absolutely produced world-class players. Milos Raonic, Denis Shapovalov and Félix Auger-Aliassime proved that.

The issue is not talent or inferior coaching. The issue is density. There are simply fewer opportunities for Canadian players to compete regularly, build ranking points gradually, and develop through a high volume of meaningful matches.

Canadian players often have to become international much earlier in their careers. That creates higher costs, more logistical stress, less continuity, and more pressure attached to results.

And for many players, that pressure arrives before they are fully ready for it.

Why Competitive Density Helps Late Developers

Dense competition ecosystems give players more time, and not every player is physically or mentally ready to turn pro at 18.

Even when college tennis isn’t an option, constant professional opportunities nearby let players survive longer while they continue to improve.

In thinner systems, players often disappear earlier because the economics and logistics become unsustainable. Some late bloomers are simply players who were given the time to mature and find their game, and who survived long enough to develop.

Final Thought

I do not think the success of Italian and Spanish tennis is just about coaching quality or talent identification.

Those countries have built ecosystems where competition is:

- accessible

- frequent

- sustainable

And over time, that compounds. Players improve fastest when competition becomes part of normal life instead of an expensive event that happens occasionally. That may be one of the biggest advantages in modern player development.